Summary (TL/DR): Once data is fetched, efficient processing becomes key. Learn how incremental syncs and checkpointing help scale pipelines, reduce load, and prevent duplicate work.

Part II: Data Processing and Pipeline Optimization

Once you’ve successfully fetched the data, the work isn’t done. How you process and manage that data can make or break the performance, scalability, and reliability of your job stream.

When dealing with high-volume or frequently updated data sources, fetching everything every time just doesn’t scale. It’s slow, inefficient, and can easily overwhelm both your system and the external APIs you’re pulling from.

Why Incremental Updates and Checkpointing Matter

Instead of pulling all the data every run, we use incremental updates—grabbing only the new or changed records since the last successful sync. This is often paired with a checkpointing system, which stores the last known state (like a timestamp or record ID) and uses it to resume fetching from that point forward.

This approach has several key benefits:

- Reduces API load – fewer calls, less chance of hitting rate limits

- Speeds up processing – you’re only handling what’s changed

- Enables reliable retries – if something fails, you can pick up where you left off

- Scales easily – works well as datasets grow over time

In my project, pagination and incremental sync were critical. We were ingesting data from large APIs, some of which had strict rate limits and batch size caps. By combining page-by-page fetching with checkpoint logic, we ensured scalable data ingestion without overwhelming the APIs—or our processing systems.

What This Looks Like in Practice

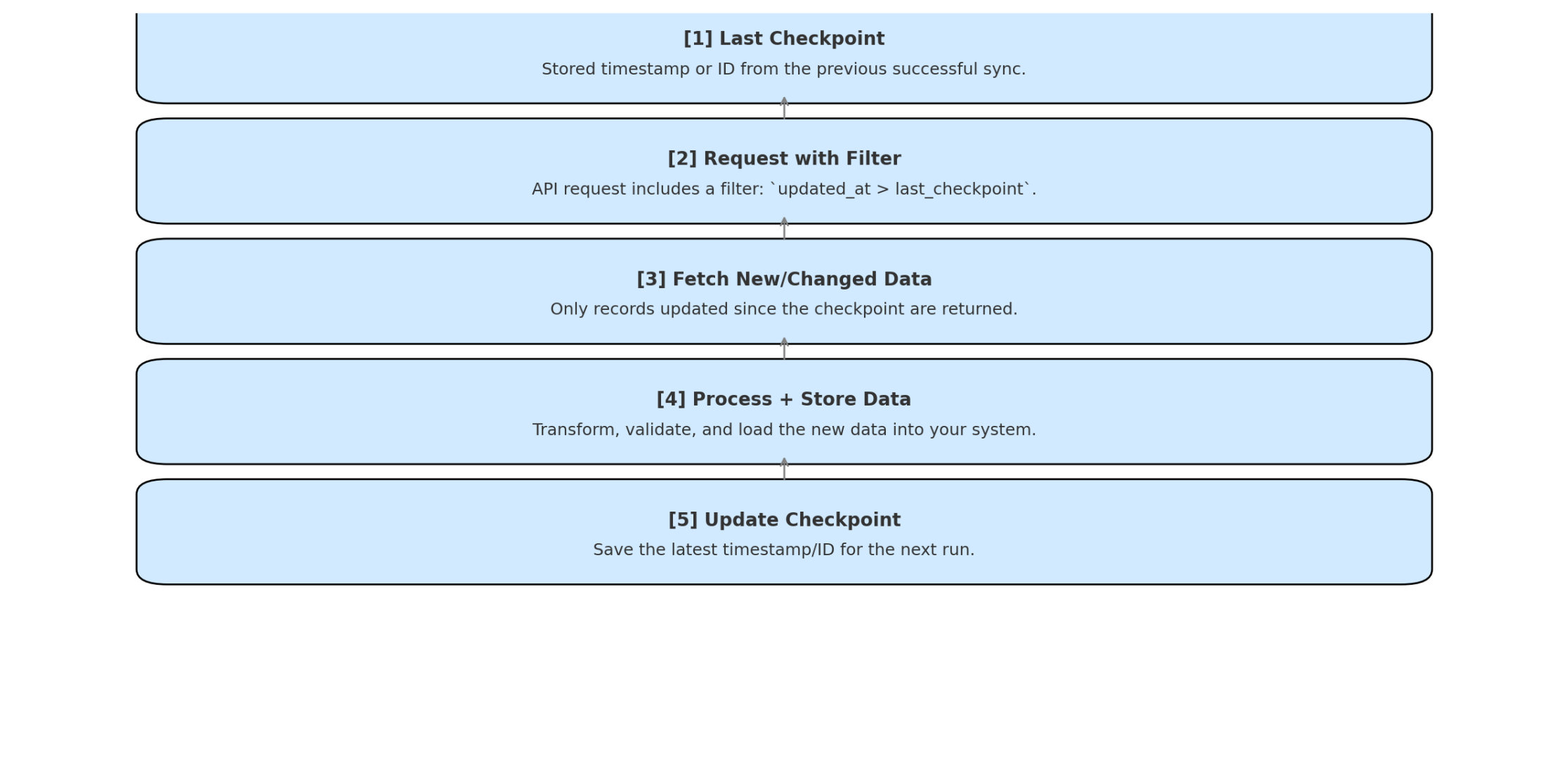

To visualize how this works in practice, here’s a simplified diagram of the incremental sync process—from storing a checkpoint to fetching and processing only new or updated data:

Here’s a simplified example:

- You store a last_updated timestamp after each sync.

- On the next run, your connector fetches only records where updated_at > last_updated.

- You process and load that data, then update the checkpoint again.

Done right, this pattern upgrades your job stream from a blunt batch job into a precise, efficient system—fast, reliable, and respectful of external resources.

With our data flowing efficiently, the next concern was ensuring it flowed securely—without compromising secrets or exposing sensitive credentials.

This article was written by Andrea Kuruppu, our superstar Data Engineer 🌟 Since we cover a lot of concepts here, we divided it into parts:

- Part I: The basics – Fetching the Data

- Part II: Data Processing and Pipeline Optimization

- Part III: Security – Managing Secrets and Authentication with a Proxy API

- Part IV: Version Control and Collaboration

- Part V: Testing, Debugging, and Deployment

- Part VI: Closing Thoughts – Challenges and Best Practices

- Part VII: Summary