Summary (TL/DR): Pipelines break—often due to changing data, not faulty code. This section covers how to use tests, CI/CD, and logging to build confidence in deployments and catch issues early.

In data engineering, your pipeline might run perfectly one day and break the next—not because of your code, but because the data changed, the API response format shifted, or a dependency failed silently.

That’s why testing and debugging aren’t optional—they’re essential for building pipelines that are reliable, predictable, and production-ready.

Why Automated Tests Matter

Just like in software development, automated testing in data workflows gives you a safety net. It helps answer critical questions like:

- Are we fetching what we expect?

- Did the schema change in a breaking way?

- Are we still catching and logging errors correctly?

- Is this transformation producing the right shape of output?

Without tests, a small unnoticed change upstream can cause silent data corruption—which might only surface after stakeholders question a dashboard or a downstream process breaks.

How We Approached Testing in Our Pipelines

In our project, we wrote and maintained automated tests for:

- Connector logic – making sure requests were built correctly, and that error cases (timeouts, auth failures) were handled

- Schema validation – comparing sample API responses against expected field structures

- Transformation logic – testing edge cases like missing values, nulls, and incorrect types

- End-to-end pipeline checks – ensuring records moved from source to target as expected

We ran these tests as part of our CI/CD pipeline, so every pull request was automatically checked before merging. This caught issues early, and gave us confidence in each deployment.

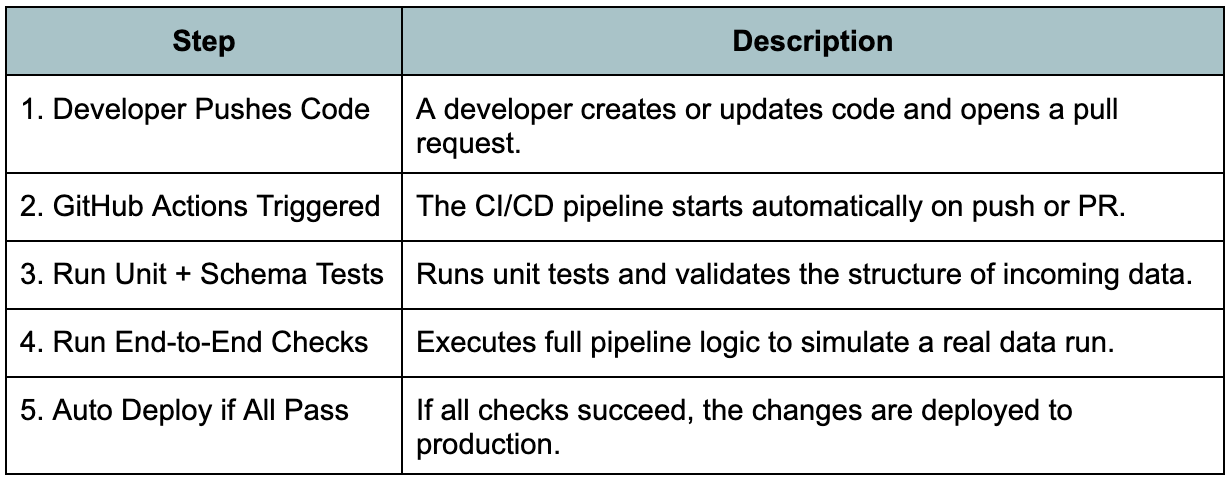

We used GitHub Actions to automate our testing and deployment workflows. Every pull request triggered a pipeline that ran our test suite—checking request logic, schema validation, and transformation correctness before any code was merged. This helped us catch issues early and ensured that only verified changes made it to production. It also gave our team confidence to iterate quickly without breaking things.

Here is the GitHub Actions CI/CD workflow:

Debugging Failures in Real Time

Despite testing, things still go wrong. That’s why we invested time into:

- Meaningful logging – clear messages at each step helped us trace issues fast

- Alerting on failed syncs – Slack or email notifications when a job failed

- Replay-safe design – the ability to re-run only the failed portion of a pipeline without reprocessing everything

One lesson we learned the hard way: just because a pipeline runs, doesn’t mean the data is good. Debugging required both engineering skill and an understanding of the data itself.

Deploying with Confidence

Deployments were managed through pull requests, with CI checks acting as our gatekeeper. For larger changes, we used:

- Staging environments – to test with real but non-critical data

- Feature flags – to roll out risky logic gradually

- Tag-based releases – to track which version was live in production

This structure helped us ship updates frequently without fear of breaking things.

In Summary

Testing and debugging in data flows go beyond writing unit tests—they’re about catching data issues, validating assumptions, and building systems that recover gracefully when things go wrong.

Next, we’ll explore the practical challenges that crop up in production-grade projects—like handling flaky APIs, rate limits, and changing schemas—and how to build around them.

This article was written by Andrea Kuruppu, our superstar Data Engineer 🌟 Since we cover a lot of concepts here, we divided it into parts:

- Part I: The basics – Fetching the Data

- Part II: Data Processing and Pipeline Optimization

- Part III: Security – Managing Secrets and Authentication with a Proxy API

- Part IV: Version Control and Collaboration

- Part V: Testing, Debugging, and Deployment

- Part VI: Closing Thoughts – Challenges and Best Practices

- Part VII: Summary