Summary (TL;DR): Hardcoding secrets is a security risk. This section explains how to use a proxy API and cloud tools like Secret Manager to secure tokens and centralize authentication logic.

When working with production-grade data flows, security isn’t optional, especially when managing API tokens, OAuth credentials, or other sensitive client information.

In our project, we implemented a proxy API pattern to handle sensitive authentication logic and abstract away direct API access from the core system logic.

Why Use a Proxy API?

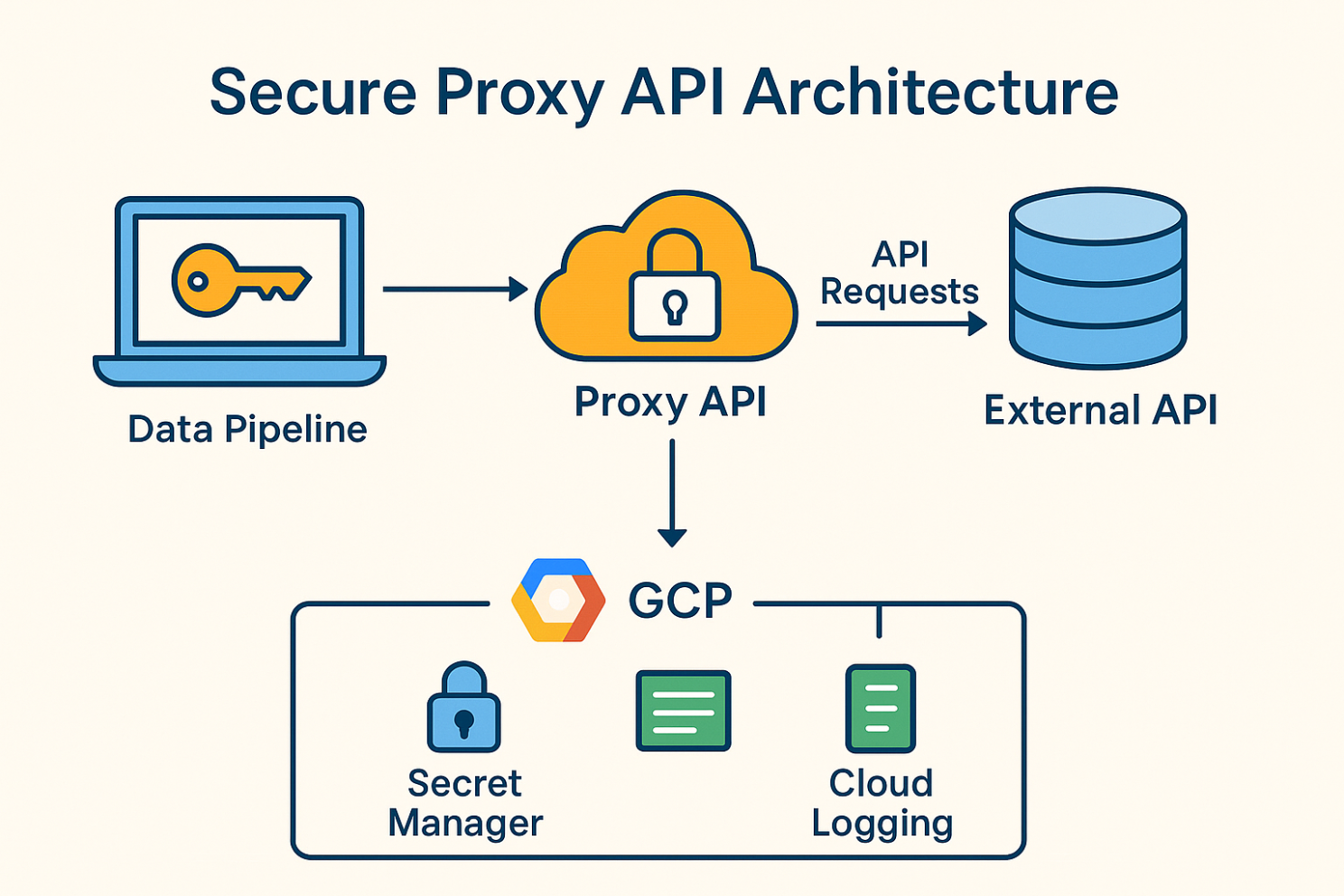

Instead of embedding API keys or secrets directly in your pipeline code, you place a proxy server between the pipeline and the external service.

This offers several advantages:

- Credential protection – Secrets are stored securely in a backend (like Secret Manager) and are never exposed in plaintext to the pipeline tasks. This keeps sensitive data like API keys or tokens safe during execution.

- Centralized logic – Authentication, token refresh, and throttling can be managed in one place.

- Auditability – Requests and access times can be logged cleanly for tracking and compliance.

- Scalable access – Multiple pipelines or users can authenticate via the same proxy with scoped permissions.
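
To make the pattern concrete, here is a minimal sketch of what a pipeline task might look like when it calls the proxy instead of the external service. The proxy URL, the `orders` resource, and the function name are hypothetical and not taken from our codebase. The point is only that the request leaving the pipeline carries no upstream API key. How the pipeline identifies itself to the proxy (for example, with a service account identity) is left out of this sketch.

```python
import urllib.parse
import urllib.request

# Hypothetical proxy address; substitute the URL of your own deployment.
PROXY_BASE_URL = "https://proxy.example.internal"


def build_proxy_request(
    resource: str,
    params: dict,
    base_url: str = PROXY_BASE_URL,
) -> urllib.request.Request:
    """Build a GET request aimed at the proxy rather than the external API.

    No API key or OAuth token is attached here: in this pattern the proxy
    adds the upstream credentials on its side.
    """
    url = f"{base_url}/{resource.lstrip('/')}"
    query = urllib.parse.urlencode(params)
    if query:
        url = f"{url}?{query}"
    return urllib.request.Request(
        url,
        headers={"Accept": "application/json"},
        method="GET",
    )
```

A task would then send the request with something like `urllib.request.urlopen(build_proxy_request("orders", {"since": "2024-01-01"}), timeout=10)`.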

Our Cloud Setup (GCP Example)

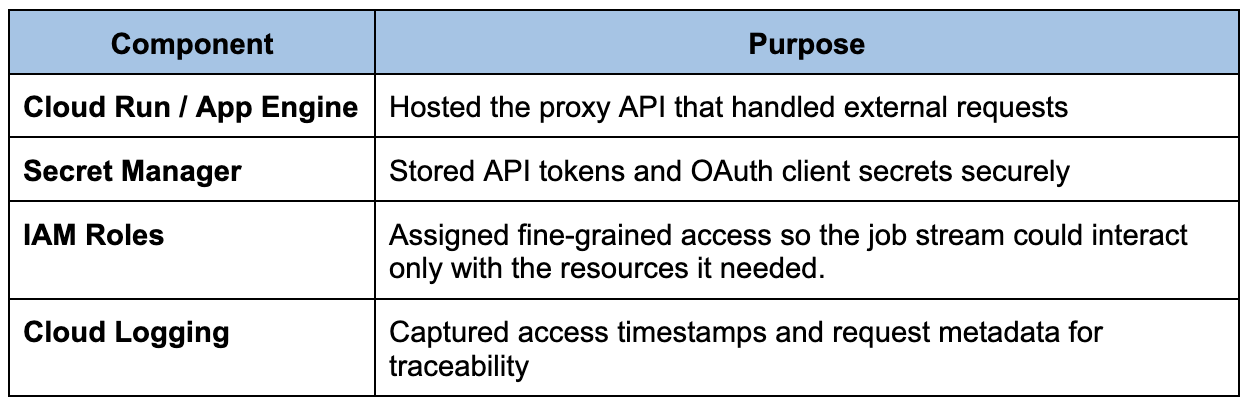

We used Google Cloud Platform to host the proxy and secure the secrets. Here’s a simplified breakdown of how we set it up:

With this setup, our workflow only needed to call the proxy with minimal configuration. The proxy handled authentication, rate limits, and error responses, and logged every request for monitoring.
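
On the proxy side, the core step is reading the token from Secret Manager and attaching it to the outgoing request. The sketch below shows one way this could look, assuming the `google-cloud-secret-manager` client library is installed where the proxy runs. The function names, the bearer-token scheme, and the injectable `fetch_secret` parameter are illustrative assumptions, not a description of our exact implementation.

```python
import urllib.request
from typing import Callable


def secret_version_name(project_id: str, secret_id: str, version: str = "latest") -> str:
    """Build the Secret Manager resource name for one version of a secret."""
    return f"projects/{project_id}/secrets/{secret_id}/versions/{version}"


def fetch_secret_from_secret_manager(name: str) -> str:
    """Read a secret payload from Secret Manager.

    The import is done lazily so the rest of this sketch can be exercised
    without the google-cloud-secret-manager package installed.
    """
    from google.cloud import secretmanager

    client = secretmanager.SecretManagerServiceClient()
    response = client.access_secret_version(request={"name": name})
    return response.payload.data.decode("UTF-8")


def build_upstream_request(
    url: str,
    secret_name: str,
    fetch_secret: Callable[[str], str] = fetch_secret_from_secret_manager,
) -> urllib.request.Request:
    """Attach the upstream token inside the proxy, never in the pipeline.

    Assumption: the external service expects a bearer token. Adjust the
    header to whatever scheme the real API uses.
    """
    token = fetch_secret(secret_name)
    request = urllib.request.Request(url)
    request.add_header("Authorization", f"Bearer {token}")
    return request
```

Passing `fetch_secret` as a parameter keeps the handler testable with a fake function and keeps the Secret Manager dependency in one place.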

Best Practices We Followed

- Never hard-coded secrets into the pipeline codebase

- Used service accounts with limited scopes

- Logged only non-sensitive metadata to avoid exposing tokens

- Rotated credentials regularly using Secret Manager versioning
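
As an illustration of the logging practice above, the following sketch redacts credential-bearing headers before a request is written to the log. The header list, the logger name, and the record fields are assumptions chosen for the example. A real proxy would adapt them to the APIs it fronts.

```python
import json
import logging

# Hypothetical logger name for the proxy's audit trail.
logger = logging.getLogger("proxy.audit")

# Headers that may carry credentials; compared case-insensitively.
SENSITIVE_HEADERS = frozenset(
    {"authorization", "proxy-authorization", "x-api-key", "cookie", "set-cookie"}
)


def redact_headers(headers: dict) -> dict:
    """Return a copy of the headers with sensitive values masked."""
    return {
        name: "[REDACTED]" if name.lower() in SENSITIVE_HEADERS else value
        for name, value in headers.items()
    }


def audit_record(method: str, path: str, status: int, duration_ms: float, headers: dict) -> dict:
    """Collect only non-sensitive metadata about one proxied request."""
    return {
        "method": method,
        "path": path,
        "status": status,
        "duration_ms": duration_ms,
        "headers": redact_headers(headers),
    }


def log_request(method: str, path: str, status: int, duration_ms: float, headers: dict) -> None:
    """Write the redacted record as one JSON line."""
    logger.info(json.dumps(audit_record(method, path, status, duration_ms, headers)))
```

Redacting at the point where the record is built, rather than at each call site, means a new log statement cannot accidentally skip the masking step.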

This architecture helped us balance security, observability, and maintainability, three key pillars for any production-ready data system.

To visualize this setup, here’s a simplified architecture showing how we used a proxy API with GCP services like Secret Manager and Cloud Logging to secure and monitor API access:

In the next section, we’ll shift focus to the teamwork side of data engineering: version control, collaboration, and how we worked together to keep things stable as our pipeline evolved.

This article was written by Andrea Alvarado Quinteros, our superstar Data Engineer 🌟. Since we cover a lot of concepts here, we divided it into parts:

- Part I: The basics – Fetching the Data

- Part II: Data Processing and Pipeline Optimization

- Part III: Security – Managing Secrets and Authentication with a Proxy API

- Part IV: Version Control and Collaboration

- Part V: Testing, Debugging, and Deployment

- Part VI: Closing Thoughts – Challenges and Best Practices

- Part VII: Summary